Vocabulary and English for Specific Purposes Research - Averil Coxhead 2018

Using multiple measures to identify specialised vocabulary

Approaches to identifying specialised vocabulary for ESP

Kwary (2011) proposes a hybrid approach to identifying specialised vocabulary that combines three methods. The first step is keyword analysis using corpora, chosen because it quickly generates an automatic list of candidate items. The second step involves systematic classification, using the keywords to help identify multi-word units in the corpus. This step is based on lexicographical methods for developing technical dictionaries and includes subject-field classification, carried out either by using a library-based system or by examining specialised texts in the field; it can also involve consultation with subject specialists. The third step involves text analysis to investigate features of texts such as abbreviations and symbols that have technical meanings in context.
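Kwary's first two steps lend themselves to a small computational sketch. The Python below is a minimal illustration under stated assumptions, not Kwary's own procedure: it assumes keyness is scored with the log-likelihood statistic (a common choice in corpus keyword analysis, but not one this passage specifies), and it treats recurring bigrams that contain a keyword as multi-word candidates. The function names `log_likelihood`, `keywords` and `multiword_candidates`, the `min_freq` threshold and the toy sentences are all hypothetical.

```python
import math
from collections import Counter

def log_likelihood(a: int, b: int, c: int, d: int) -> float:
    """Log-likelihood keyness for one word (assumed statistic).

    a, b: the word's frequency in the specialised and reference corpora
    c, d: total tokens in the specialised and reference corpora
    """
    e1 = c * (a + b) / (c + d)  # expected frequency in the specialised corpus
    e2 = d * (a + b) / (c + d)  # expected frequency in the reference corpus
    ll = 0.0
    if a > 0:
        ll += a * math.log(a / e1)
    if b > 0:  # a zero count contributes nothing (0 * log 0 is taken as 0)
        ll += b * math.log(b / e2)
    return 2 * ll

def keywords(spec_tokens, ref_tokens, top_n=10):
    """Step 1 (assumed): rank words over-represented in the specialised corpus."""
    spec, ref = Counter(spec_tokens), Counter(ref_tokens)
    c, d = sum(spec.values()), sum(ref.values())
    scored = [
        (w, log_likelihood(a, ref[w], c, d))
        for w, a in spec.items()
        if a / c > ref[w] / d  # keep positive keywords only
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]

def multiword_candidates(tokens, keyword_set, n=2, min_freq=2):
    """Step 2 (assumed): recurring n-grams that contain at least one keyword."""
    grams = Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    return {g: f for g, f in grams.items()
            if f >= min_freq and any(t in keyword_set for t in g)}

# Invented toy corpora, for illustration only.
spec = "the interest rate rose and the interest rate fell".split()
ref = "the cat sat on the mat and the dog sat too".split()
top = keywords(spec, ref, top_n=2)
print([w for w, _ in top])  # ['interest', 'rate']
print(multiword_candidates(spec, {w for w, _ in top}))
# {('the', 'interest'): 2, ('interest', 'rate'): 2}
```

The candidate ('the', 'interest') shows why the classification step matters: frequency alone surfaces function-word combinations, which a human or lexicographic check would then filter out. Kwary's third step (abbreviations and symbols in context) is not modelled here.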

In search of a statistical method for identifying specialised vocabulary, Chujo and Utiyama (2006) used a section of the British National Corpus (BNC) consisting of Commerce and Finance texts. This corpus of over seven million running words was analysed with seven different statistical measures to extract vocabulary for beginner, intermediate and advanced learners. Examples at each of these levels are shown in Table 2.4, along with the method of extraction.

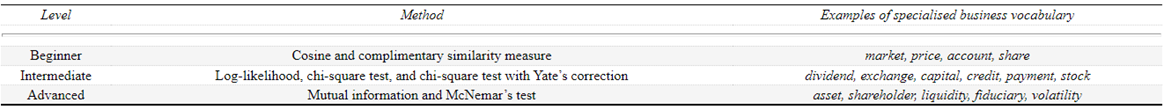

Table 2.4 Levels, methods and examples from Chujo and Utiyama (2006, pp. 261–262)
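Statistical measures of this kind typically score each word by comparing its frequency in a specialised corpus with its frequency in a reference corpus. The sketch below assumes a common 2×2 contingency-table layout (a = the word's frequency in the specialised corpus, b = its frequency in the reference corpus, c and d = all other tokens in each corpus) and the textbook forms of the cosine and McNemar statistics; the exact definitions used by Chujo and Utiyama (2006) may differ. The helper names and the counts are hypothetical toy values.

```python
import math

def contingency(word_in_spec: int, word_in_ref: int, spec_size: int, ref_size: int):
    """Build the 2x2 cells (a, b, c, d) for one word.

    Assumed layout: a = word in specialised corpus, b = word in reference corpus,
    c = other tokens in specialised corpus, d = other tokens in reference corpus.
    """
    return word_in_spec, word_in_ref, spec_size - word_in_spec, ref_size - word_in_ref

def cosine(a: int, b: int, c: int, d: int) -> float:
    # Textbook cosine association: a / sqrt((a + b)(a + c)); d is unused.
    return a / math.sqrt((a + b) * (a + c))

def mcnemar(a: int, b: int, c: int, d: int) -> float:
    # Textbook McNemar statistic on the off-diagonal cells: (b - c)^2 / (b + c).
    return (b - c) ** 2 / (b + c)

# Invented toy counts: a 1,000-token specialised corpus, a 10,000-token reference corpus.
frequent = contingency(50, 20, 1_000, 10_000)  # a common word in both corpora
rare = contingency(2, 0, 1_000, 10_000)        # a rare word found only in the specialised corpus

print(cosine(*frequent) > cosine(*rare))    # True: cosine favours the frequent word
print(mcnemar(*rare) > mcnemar(*frequent))  # True: McNemar favours the rare word
```

With these toy counts the cosine score ranks the frequent word higher and McNemar ranks the rare word higher. This is consistent with the pattern Chujo and Utiyama (2006) report, in which the Cosine method yielded high-frequency (beginner) items and the McNemar method low-frequency (advanced) items.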

Chujo and Utiyama (2006) note that the methods used for beginner-level extraction brought high-frequency words to the surface, including a fairly high proportion of function words. The average frequency of the extracted words dropped sharply across the levels, from 58,517 for the Cosine method (beginner) to 134 for the McNemar method (advanced). Word length increased from beginner to advanced, as can be seen in Table 2.4. The top 500 items from each type of analysis were then investigated further: their occurrence in the BNC frequency lists (Nation, 2006) and in textbooks, their word length, and their use in a native-speaker corpus.
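The level comparison above rests on simple averages over each method's top-ranked items. A minimal sketch, assuming each method produces a ranked word list and that a frequency dictionary for the corpus is available; the function name `list_profile` and all figures are hypothetical toy values, not data from the study. The `top_n=500` default mirrors the top-500 cut-off mentioned in the text.

```python
from statistics import mean

def list_profile(ranked_words, freq, top_n=500):
    """Mean corpus frequency and mean word length (in characters) of the top_n items."""
    top = ranked_words[:top_n]
    return {
        "mean_frequency": mean(freq[w] for w in top),
        "mean_length": mean(len(w) for w in top),
    }

# Invented toy frequencies, not figures from Chujo and Utiyama (2006).
freq = {"the": 6000, "of": 3000, "market": 120, "liquidity": 12, "securitisation": 3}
beginner_like = ["the", "of", "market"]
advanced_like = ["liquidity", "securitisation"]

print(list_profile(beginner_like, freq))
print(list_profile(advanced_like, freq))  # {'mean_frequency': 7.5, 'mean_length': 11.5}
```

Even on toy data, the list dominated by function words has a far higher mean frequency and a shorter mean word length, which is the direction of the contrast described for the beginner and advanced extractions.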